CSC 2532 Winter 2024: Statistical Learning Theory

This course covers several topics in classical machine learning theory.

Topics may include: Asymptotic statistics, Uniform Convergence, Generalization, Complexity measures, Kernel Methods, Feature Learning, Neural Networks. More details can be found in the syllabus. Please also sign up for Piazza.

This class requires a good informal knowledge of probability theory, linear algebra, and real analysis (at least at the Master's level). Homework 0 is a good way to check your background.

Announcements:

- HW3 is out and due on 4/05 23:59, to be submitted through Crowdmark. TA OHs TBD.

- Midterm logistics and practice questions can be found here.

- Project proposals are due on 3/9, 23:59. See below for instructions and list of suggested papers.

- HW2 is out and due on 2/19 23:59, to be submitted through Crowdmark. TA OHs on 02/16 1pm @ Pratt 278.

- Lectures will be hybrid: in person in room SU 255 and streamed over Zoom.

- HW1 is out and due on 2/04 23:59, to be submitted through Crowdmark. TA OHs on 02/02 1pm @ Pratt 278.

Instructor: Murat A. Erdogdu

- Email: csc2532prof@cs.toronto.edu

- Office hours: W 11:00-12:00 at Pratt 286b

Teaching Assistants: Mert Vural

- Email: csc2532tas@cs.toronto.edu

Time & Location:

| Section | Room | Lecture time | Zoom |
|---|---|---|---|
| L0101 | SU 255 | Th 16-18 | link |

Lectures and timeline

| Week | Topics and Lecture notes | Lectures | Timeline |

|---|---|---|---|

| 1 | Uniform convergence & Generalization | lecture 1 | syllabus |

| 2 | Covering with epsilon-nets | lecture 2 | |

| 3 | Symmetrization | lecture 3 | hw1 out |

| 4 | Rademacher complexity | lecture 4 | |

| 5 | Combinatorial Measures of Complexity | lecture 5 | hw1 due & hw2 out |

| 6 | Chaining | lecture 6 | |

| 7 | Reading week | hw2 due | |

| 8 | Kernel Methods I | lecture 7 | proposals due |

| 9 | Kernel Methods II | lecture 8 | |

| 10 | Midterm | ||

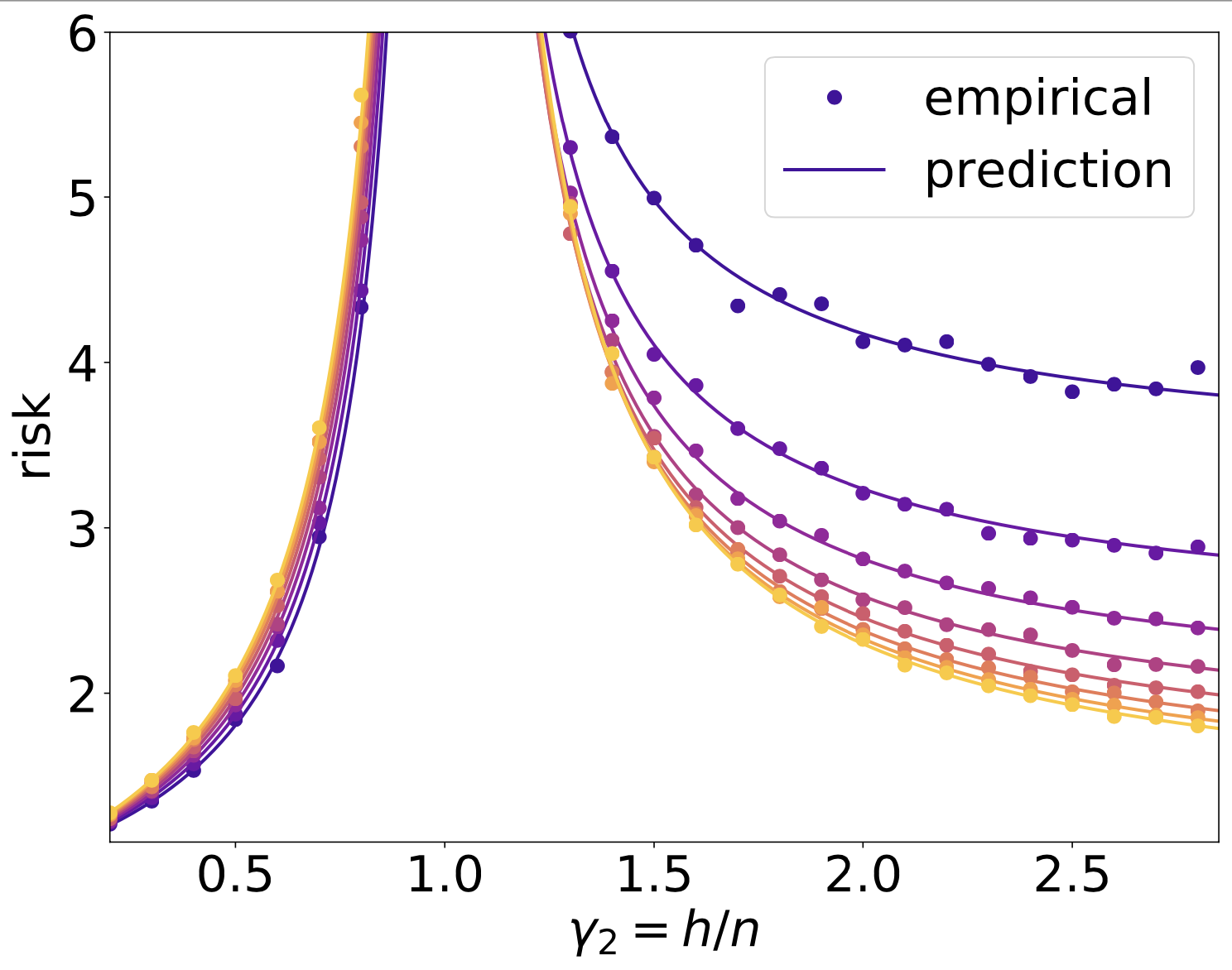

| 11 | Double-descent Risk Curves | lecture 9 | hw 3 out |

| 12 | Neural Networks: Linearization | lecture 10 | |

| 13 | Neural Networks: Feature Learning | lecture 11 | hw 3 due |

Homeworks

| Homework # | Out | Due | TA Office Hours |
|---|---|---|---|
| Homework 0 - V0 | 1/11 | - | - |
| Homework 1 - V0 | 1/22 | 2/04 23:59 | 02/02 1pm @ Pratt 278 |
| Homework 2 - V0 | 2/06 | 2/19 23:59 | 02/16 1pm @ Pratt 278 |
| Homework 3 - V0 | 3/20 | 4/05 23:59 | TBD |

LaTeX template can be found here.

Project

The final project should give you experience in carrying out theoretical research.

- Option 1: Read a theoretical machine learning paper, write a comprehensive review of it, and understand its main building blocks.

- Option 2: Conduct theoretical research that is relevant to this course. You will review the relevant literature, identify interesting research directions, and either develop novel methodology or explain an observed behavior of a learning algorithm.

Evaluation will be based on two reports:

- Proposal (2 pts): half a page, due 3/09 23:59, listing the paper(s) to be reviewed with a brief summary of what each paper is about and why it is relevant and interesting.

- Final report (28 pts): 3 pages, due 4/07, giving a comprehensive review of the paper(s), the key ideas and tools used in the proofs, potential future directions, and open problems.

You may work on the project alone or in a group of 2. Groups of 2 need to review 2 papers, and the standards for a group project will be higher.

Project Inspiration: You can browse recent papers in COLT, NeurIPS, ICML, ICLR, and JMLR to get project ideas and pick a paper to review.

Several research directions can be found here, but the list is by no means comprehensive.

LaTeX template for reports can be found here.

Computing Resources

For the homework assignments, we will use Python, and libraries such as NumPy, SciPy, and scikit-learn. You have two options:

- The easiest option is to run everything on Colab.

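- Alternatively, you can install everything yourself on your own machine:

  - If you don't already have Python, install it using Anaconda.

  - Use pip to install the required packages (note that scikit-learn is installed under the name `scikit-learn`; the old `sklearn` package name is deprecated):

    pip install scipy numpy autograd matplotlib jupyter scikit-learn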

- For those unfamiliar with NumPy, there are many good resources, e.g. the NumPy tutorial and NumPy Quickstart.
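If you install packages locally, a short script can confirm that each one imports correctly. This is a minimal sketch, not part of the course materials: the helper name `check_environment` is hypothetical, and it only checks that each package can be imported and reports its `__version__` attribute when one exists. Note that scikit-learn is imported as `sklearn`; Jupyter is left out because it is mainly used as a command-line application rather than imported.

```python
import importlib

# Import names of the packages from the pip command (scikit-learn imports as "sklearn").
# Jupyter is omitted: it is used from the command line rather than imported.
REQUIRED = ("numpy", "scipy", "autograd", "matplotlib", "sklearn")


def check_environment(modules=REQUIRED):
    """Hypothetical helper: map each module name to its version string,
    "unknown" if it imports but has no __version__, or None if it is missing."""
    report = {}
    for name in modules:
        try:
            mod = importlib.import_module(name)
        except ImportError:
            # Module is not installed (ModuleNotFoundError is a subclass of ImportError).
            report[name] = None
            continue
        report[name] = getattr(mod, "__version__", "unknown")
    return report


if __name__ == "__main__":
    for name, version in check_environment().items():
        status = version if version is not None else "MISSING"
        print(f"{name:12s} {status}")
```

Any line printed as `MISSING` points to a package that still needs to be installed with pip.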