STA 414/2104 Winter 2025:

Statistical Methods for Machine Learning II

This course introduces probabilistic learning tools such as exponential families, directed graphical models, Markov random fields, exact inference techniques, message passing, sampling and MCMC, hidden Markov models, variational inference, the EM algorithm, Bayesian regression, probabilistic PCA, neural networks, kernel methods, Gaussian processes, variational autoencoders, and diffusion models. It will also offer a broad view of model-building and optimization techniques based on probabilistic building blocks, which will serve as a foundation for more advanced machine learning courses.

More details can be found in the syllabus and on Piazza.

Announcements:

- Final exam logistics, OHs information, and a practice final can be found here.

- Instructor OHs on Mar 31 will be held 4-5pm, same location.

- A4 is out, and due on 03/31 23:59. TA office hours are Mar 26 3-4pm, Mar 28 3:30-4:30pm, both at UY 9040.

- A3 is out, and due on 03/16 23:59. TA office hours are Mar 13 2-3pm on zoom, Mar 14 2-3pm on zoom.

- Midterm logistics, TA OHs information, and a practice midterm can be found here.

- A2 is out, and due on 02/16 23:59 (You may submit up to 3 days late, without penalty). TA office hours are Feb 12 11am-12pm on zoom pass:A2 and Feb 13, 4-5pm at Hydro 9013.

- A1 is out, and due on 02/02 23:59. TA office hours are Jan 29, 10-11am on zoom pass:Bayes and Jan 30, 4-5pm at MY480.

- Lectures begin on Jan 6!

Instructors:

| Prof | Murat A. Erdogdu |
|---|---|
| Email | sta414-2104prof@cs.toronto.edu |
| Office hours | M 17-19 @ FE 230 |

Teaching Assistants:

Yichen J., Alireza MH, Liam W., Weizheng Z.

- Email: sta414-2104ta@cs.toronto.edu

Time & Location:

| Section | Lecture / Tutorial |
|---|---|
| STA414 LEC0101 & STA2104 LEC0101 | M 14-17 @ FE 230 |
| STA414 LEC0501 & STA2104 LEC-X | T 18-21 @ MS 2170 |

Suggested Reading

No required textbooks. Suggested reading will be posted after each lecture (See lectures below).

- (PRML) Christopher M. Bishop (2006) Pattern Recognition and Machine Learning

- (MLPP) Kevin P. Murphy (2012), Machine Learning: A Probabilistic Perspective

- (PML1) Kevin P. Murphy (2022), Probabilistic Machine Learning: An Introduction

- (PML2) Kevin P. Murphy (2023), Probabilistic Machine Learning: Advanced Topics

- (ITIL) David MacKay (2003) Information Theory, Inference, and Learning Algorithms

- (ESL) Trevor Hastie, Robert Tibshirani, Jerome Friedman (2009) The Elements of Statistical Learning

Lectures and timeline

| Week | Lectures | Suggested reading | Tutorials | Timeline | |

|---|---|---|---|---|---|

| 1 | Introduction Probabilistic Models |

MLPP 1 & 2 PRML 2.4 |

tut01 | syllabus | |

| 2 | Directed Graphical Models Markov Random Fields |

MLPP 19-19.5, 20.3 MLPP 10 |

tut02 | ||

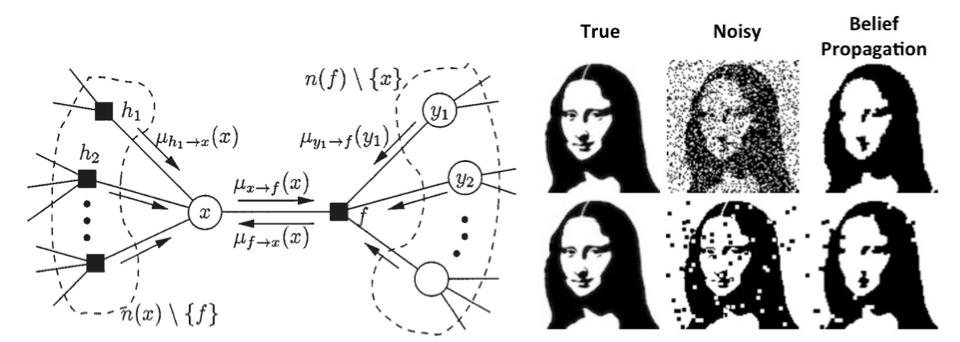

| 3 | Exact inference Approximate inference |

ITIL 21.1, 26 ITIL 29 |

tut03 | A1 out | |

| 4 | Message passing Decision theory |

MLPP 20.2,22.2 PRML 1.5 |

- | A1 due | |

| 5 | Sampling Algorithms | MLPP 17.2, 24.3 this paper |

colab demos true skill |

A2 out | |

| 6 | Neural Networks Variational inference I |

MLPP 17.3 MLPP 21.1-3 |

colab | A2 due | |

| 7 | Reading week (no class/tutorial) |

- | - | - | - |

| 8 | Midterm exam | midterm | |||

| 9 | Variational inference II Variational Autoencoders |

PRML 10.1-10.2 Blei’s notes |

colab | A3 out | |

| 10 | EM algorithm Bayesian regression |

PRML 9 PRML 12.2 |

- | A3 due | |

| 11 | Embeddings/Attention | - | - | A4 out | |

| 12 | Gaussian Processes Diffusion models |

CVPR tutorial this blog |

A4 due | ||

| 13 | Diffusion models II Final exam review |

Exam details |

Assignments

| Assignment # | Out | Due | TA Office Hours |
|---|---|---|---|
| Assignment 1 | 1/20 | 2/02 | Jan 29, 10-11am on zoom and Jan 30, 4-5pm at MY480 |
| Assignment 2 | 2/03 | 2/16 | Feb 12, 11am-12pm on zoom and Feb 13, 4-5pm at Hydro 9013 |
| Assignment 3 | 3/03 | 3/16 | Mar 13, 2-3pm on zoom and Mar 14, 2-3pm on zoom |
| Assignment 4 | 3/18 | 3/31 | Mar 26, 3-4pm and Mar 28, 3:30-4:30pm, both at UY 9040 |

Computing Resources

For the assignments, we will use Python, and libraries such as NumPy, SciPy, and scikit-learn. You have two options:

- The easiest option is to run everything on Colab.

- Alternatively, you can install everything yourself on your own machine.
  - If you don’t already have Python, install it using Anaconda.
  - Use pip to install the required packages: `pip install scipy numpy autograd matplotlib jupyter scikit-learn`

- For those unfamiliar with NumPy, there are many good resources, e.g. the NumPy tutorial and NumPy Quickstart.
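If you install locally, a quick import check can confirm that the packages named in the pip command above are usable before you start an assignment. The following is a minimal sketch, not part of the official course materials; `check_packages` and `PACKAGES` are hypothetical names chosen for illustration. Note that scikit-learn is imported under the name `sklearn`.

```python
import importlib

# Import names for the libraries used in the assignments.
# scikit-learn is imported as `sklearn`.
PACKAGES = ["numpy", "scipy", "autograd", "matplotlib", "sklearn"]


def check_packages(names):
    """Map each import name to its version string, or None if the import fails."""
    status = {}
    for name in names:
        try:
            module = importlib.import_module(name)
        except Exception:
            # Usually ImportError; a broken install can fail in other ways too.
            status[name] = None
        else:
            # Not every module exposes __version__, so fall back to "unknown".
            status[name] = getattr(module, "__version__", "unknown")
    return status


if __name__ == "__main__":
    for name, version in check_packages(PACKAGES).items():
        label = version if version is not None else "NOT INSTALLED"
        print(f"{name}: {label}")
```

Any package reported as `NOT INSTALLED` can be installed with pip and the check rerun. On Colab, these libraries are typically preinstalled, so this step mainly matters for local setups.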